JANUARY/31/2014 UPDATES:

Neanderthal Genes Linked to Human Health

http://online.wsj.com/news/articles/SB10001424052702303743604579350653841134542

Attitudes about Aging: A Global Perspective.

In a Rapidly Graying World, Japanese Are Worried, Americans Aren’t

http://www.pewglobal.org/2014/01/30/attitudes-about-aging-a-global-perspective/

27 Dimensions! Physicists See Photons in New Light

http://news.yahoo.com/27-dimensions-physicists-see-photons-light-115226866.html

Scientists use fruit flies to detect cancer

http://www.gizmag.com/fruit-flies-detect-cancer/30665/

Physicists Create Synthetic Magnetic Particle

http://scitechdaily.com/physicists-create-synthetic-magnetic-particle/

Timeline of the far future

http://www.bbc.com/future/story/20140105-timeline-of-the-far-future

World risks deflationary shock as BRICS puncture credit bubbles

http://www.telegraph.co.uk/finance/comment/ambroseevans_pritchard/10605957/World-risks-deflationary-shock-as-BRICS-puncture-credit-bubbles.html

Swarms of drones could be the next frontier in emergency response

http://www.nbcnews.com/technology/swarms-drones-could-be-next-frontier-emergency-response-2D11988741

Can People Survive Without Smartphones? [Statistics]

http://mobidev.biz/blog/can_people_survive_without_smartphones_%5Bstatistics%5D.html#sthash.wjccWu8B.dpuf

Will flying cars ever take off?

http://www.bbc.com/future/story/20140126-will-flying-cars-ever-take-off

20YY: Preparing for War in the Robotic Age

http://www.cnas.org/20YY-Preparing-War-in-Robotic-Age#.UuruiLTSmHc

‘Robot Land’ – South Korea’s robot theme park to open in 2015

http://www.impactlab.net/2014/01/29/robot-land-south-koreas-robot-theme-park-to-open-in-2015/

Edison supercomputer electrifies scientific computing

http://phys.org/news/2014-01-edison-supercomputer-electrifies-scientific.html

There Is No More B2B or B2C: There Is Only Human to Human (H2H)

http://socialmediatoday.com/bryan-kramer/2115561/there-no-more-b2b-or-b2c-it-s-human-human-h2h

[2014 trends] The changing landscape of design

http://www.bizcommunity.com/Article/196/424/107945.html

No Longer Science Fiction: Start-Up Unveils Radical Consciousness Technology

http://www.huffingtonpost.com/gregory-weinkauf/no-longer-science-fiction_1_b_4688935.html

West African Inventor Makes a $100 3D Printer From E-Waste

http://inhabitat.com/west-african-inventor-makes-a-100-3d-printer-from-e-waste/

U.N. sounds alarm on worsening global income disparities

http://www.reuters.com/article/2014/01/29/us-global-economy-un-idUSBREA0S1FD20140129

DAILY QUOTE: Robert Theobald reasons: “...Our future is determined by the actions of all of us alive today. Our choices determine our destiny...”

RECOMMENDED BOOK:

The Singularity Is Near: When Humans Transcend Biology by Ray Kurzweil

ISBN-13: 978-0143037880

Best Regards,

Andres Agostini, Group Administrator

www.ThisSuccess.wordprocessor.com

www.xeeme.com/AAgostini

(¯`*• Global Source and/or more resources at http://goo.gl/zvSV7 │ www.Future-Observatory.blogspot.com and on LinkedIn Group's "Becoming Aware of the Futures" at http://goo.gl/8qKBbK │ @SciCzar │ Point of Contact: www.linkedin.com/in/AndresAgostini

Friday, January 31, 2014

Posted by http://goo.gl/JujXk

Dr. Andres Agostini → AMAZON.com/author/agostini → LINKEDIN.com/in/andresagostini → AGO26.blogspot.com → appearoo.com/AAGOSTINI

at

1/31/2014 01:24:00 AM

Thursday, January 30, 2014

Read it here or at the Lifeboat Foundation's blog at http://lifeboat.com/blog/2014/01/future-observatory

JANUARY/30/2014's HEADLINES:

Cancer Researchers Identify New Drug to Inhibit Breast Cancer

http://guardianlv.com/2014/01/cancer-researchers-identify-new-drug-to-inhibit-breast-cancer/

Russia, US to join forces against space threats

http://voiceofrussia.com/news/2014_01_29/Russia-US-to-join-forces-against-space-threats-1145/

The rise of artificial intelligence

http://www.brisbanetimes.com.au/digital-life/digital-life-news/the-rise-of-artificial-intelligence-20140122-317g3.html

MIT and Harvard release working papers on open online courses

http://www.kurzweilai.net/mit-and-harvard-release-working-papers-on-open-online-courses?utm_source=KurzweilAI+Weekly+Newsletter&utm_campaign=cbb093c38b-UA-946742-1&utm_medium=email&utm_term=0_147a5a48c1-cbb093c38b-282009765

13 Quotes That Show Why Libertarian Tech Billionaire Peter Thiel Is A Scary Genius

http://www.businessinsider.com/peter-thiel-quotes-2014-1?op=1#ixzz2rr4DD75T

Quantum cloud simulates magnetic monopole

http://www.nature.com/news/quantum-cloud-simulates-magnetic-monopole-1.14612

North Korea possesses two-thirds of the world’s rare earths

http://www.impactlab.net/2014/01/24/north-korea-possesses-two-thirds-of-the-worlds-rare-earths/

Recent discovery of quantum vibrations in microtubules inside brain neurons corroborates controversial theory of consciousness

http://www.impactlab.net/2014/01/21/recent-discovery-of-quantum-vibrations-in-microtubules-inside-brain-neurons-corroborates-controversial-theory-of-consciousness/

The internet population in China hit 618 million in 2013 with 81% connected via mobile internet

http://www.impactlab.net/2014/01/20/the-internet-population-in-china-hit-618-million-in-2013-with-81-connected-via-mobile-internet/

The Bitcoin ATM Has a Dirty Secret: It Needs a Chaperone

http://www.wired.com/wiredenterprise/2014/01/bitcoin_atm/

Can Science Save Modern Art?

http://www.fastcodesign.com/3025595/asides/can-science-save-modern-art

Welcome to the 2020s (Future Timeline Events 2020-2029)

http://www.youtube.com/watch?v=1Lmd3l0W5JI

2070-2079 timeline

http://www.futuretimeline.net/21stcentury/2070-2079.htm

Lemur Studio Design develops mine detector in a shoe

http://www.gizmag.com/lemur-studio-saveonelife/30569/

CERN experiment produces first beam of antihydrogen atoms for hyperfine study

http://phys.org/news/2014-01-cern-antihydrogen-atoms-hyperfine.html

Architects build the jellyfish house around a floating pool

http://www.designboom.com/architecture/wiel-arets-architects-build-the-jellyfish-house-around-a-floating-pool-1-26-2014/

Monitoring Drugs Flowing in the Bloodstream

http://www.mdtmag.com/news/2014/01/monitoring-drugs-flowing-bloodstream

$1.7 million personal submarine lets you 'fly' underwater

http://www.cbsnews.com/news/17-million-personal-submarine-lets-you-fly-underwater/

Computing with silicon neurons: Scientists use artificial nerve cells to classify different types of data

http://www.sciencedaily.com/releases/2014/01/140128094539.htm

10 technology trends to watch in 2014

http://www.cbsnews.com/news/10-technology-trends-to-watch-2014/

The year ahead: Hot ICT tech trends in 2014

http://www.marsdd.com/2014/01/28/year-ahead-hot-ict-tech-trends-2014/

5 Futuristic Trends That Will Shape Business And Culture Today

http://www.fastcoexist.com/3025012/futurist-forum/5-futuristic-trends-that-will-shape-business-and-culture-today

Stephen Hawking says there is no such thing as black holes, Einstein spinning in his grave

http://www.express.co.uk/news/science-technology/455880/Stephen-Hawking-says-there-is-no-such-thing-as-black-holes-Einstein-spinning-in-his-grave

Google’s Ray Kurzweil predicts how the world will change

http://jimidisu.com/?p=6013

Celebrating water cooperation: Red Sea to Dead Sea

http://www.aljazeera.com/indepth/opinion/2014/01/celebrating-water-cooperation-r-201412072619203800.html

7 Trends For 2014

http://blogs.sap.com/innovation/innovation/seven-trends-2014-01242649

8 Quick Ways to Unlock Your Creative Potential

https://www.openforum.com/articles/8-quick-ways-to-unlock-your-creative-potential/?intlink=us-openforum-exp-mostrecent-3

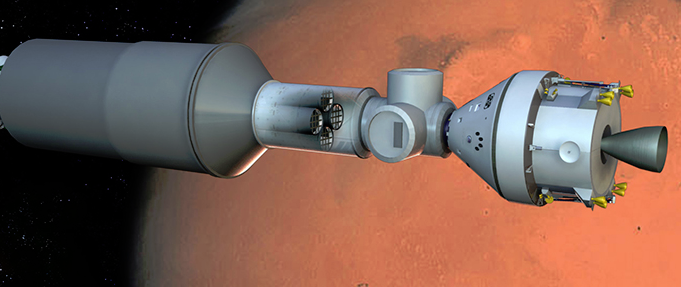

Exploring Mars Habitability – Opportunity Updates Our Understanding of Our Planetary Neighbour

http://www.21stcentech.com/exploring-mars-habitability-opportunity-updates-understanding-planetary-neighbour/

DAILY QUOTE: Michael Anissimov says: “...One of the biggest flaws in the common conception of the future is that the future is something that happens to us, not something we create...”

RECOMMENDED BOOK:

Radical Evolution: The Promise and Peril of Enhancing Our Minds, Our Bodies -- and What It Means to Be Human by Joel Garreau

ISBN-13: 978-0767915038

Best Regards,

Andres Agostini, Group Administrator

Posted by http://goo.gl/JujXk

Dr. Andres Agostini → AMAZON.com/author/agostini → LINKEDIN.com/in/andresagostini → AGO26.blogspot.com → appearoo.com/AAGOSTINI

at

1/30/2014 01:53:00 AM

Wednesday, January 29, 2014

JAN/29/2014 HEADLINES:

Where and when the brain recognizes, categorizes an object

http://www.kurzweilai.net/where-and-when-the-brain-recognizes-categorizes-an-object

Interplanetary dust particles carry water and organics

http://www.kurzweilai.net/interplanetary-dust-particles-carry-water-and-organics

DNA-built nanoparticles target cancer tumors, deal with toxicity

http://www.kurzweilai.net/dna-built-nanoparticles-target-cancer-tumors-deal-with-toxicity

The Cities With The Best Public Transportation In The U.S.

http://www.fastcoexist.com/3025623/the-cities-with-the-best-public-transportation-in-the-us

Is 3-D Printing Better For The Environment?

http://www.fastcoexist.com/3024867/world-changing-ideas/is-3d-printing-better-for-the-environment

A Flying Lifeguard Robot Could Save You From Drowning

http://www.fastcoexist.com/3024583/world-changing-ideas/a-flying-lifeguard-robot-could-save-you-from-drowning

5 Visions For The Consumer Products Of 2030

http://www.fastcoexist.com/3025557/5-visions-for-the-consumer-products-of-2030

3D Printed Custom Insoles Made From a Ten Second Video Of Your Foot

http://gizmodo.com/3d-printed-custom-insoles-made-from-a-ten-second-video-1511602753

How Bioelectronics Promise A Future Cure For Cancer

http://gizmodo.com/how-bioelectronics-will-cure-cancer-1510812961

This Insanely Loud Sound System Simulates the Roar of a Rocket Launch

http://gizmodo.com/this-insanely-loud-sound-system-simulates-the-roar-of-a-1511519477

The Class Of 2014: The New Mayors Who Are Building The Future Of America's Cities

http://www.fastcoexist.com/3024718/the-class-of-2014-5-new-mayors-who-are-building-the-future-of-americas-cities

8 Amazing Getaways For Architecture Buffs

http://www.fastcodesign.com/3025578/8-amazing-getaways-for-architecture-buffs

7 Rules For Selling In A World That Has Enough Stuff

http://www.fastcocreate.com/3025044/7-rules-for-selling-stuff-in-a-world-that-has-enough-stuff

The New Object-Tracking Craze Ensures You'll Never Lose Anything Again

http://www.fastcolabs.com/3025653/the-new-object-tracking-craze-ensures-youll-never-lose-anything-again

A Holodeck Videogame Designed to Train Soldiers

http://www.wired.com/dangerroom/2014/01/holodeck/

This space fly proves that humans aren't cut for interplanetary travel

http://sploid.gizmodo.com/this-space-fly-can-be-proof-that-humans-may-not-be-cut-1511603945/@ericlimer

Scientists Use Acid to Turn Blood Cells into Stem Cells in 30 Minutes

http://gizmodo.com/scientists-use-acid-to-turn-blood-cells-into-stem-cells-1511506552

23 of the Biggest Machines Ever Moved On Wheels

http://gizmodo.com/23-of-the-biggest-machines-ever-moved-by-man-1509734169

Scientists Digitize Psychology’s Most Famous Brain

http://www.wired.com/wiredscience/2014/01/hm-brain-closeup/

The Next Big Thing You Missed: A Tiny Startup’s Plot to Beat Google at Big Data

http://www.wired.com/business/2014/01/keen/

By 2030 U.S. Army will replace 25% of soldiers with robots

http://www.impactlab.net/2014/01/29/by-2030-u-s-army-will-replace-25-of-soldiers-with-robots/

Jade Rabbit Goes Quiet on the Moon

http://www.21stcentech.com/headlines-jade-rabbit-quiet-moon/

Group Administrator, Andres Agostini – www.xeeme.com/AAgostini

Posted by http://goo.gl/JujXk

Dr. Andres Agostini → AMAZON.com/author/agostini → LINKEDIN.com/in/andresagostini → AGO26.blogspot.com → appearoo.com/AAGOSTINI

at

1/29/2014 04:22:00 PM

Tuesday, January 28, 2014

Where and when the brain recognizes, categorizes an object http://www.kurzweilai.net/where-and-when-the-brain-recognizes-categorizes-an-object

How the brain forms time-linked memories http://www.kurzweilai.net/how-the-brain-links-memories-in-time

Crowdsourced ‘EteRNA’ RNA designs outperform computer algorithms www.kurzweilai.net/press-release-crowdsourced-rna-designs-outperform-computer-algorithms-carnegie-mellon-and-stanford-researchers-report

Wrinkled metamaterials for controlling light and sound propagation http://www.kurzweilai.net/wrinkled-metamaterials-for-controlling-light-and-sound-propagation

Machine Learning is Progressing Faster Than You Think http://www.singularityweblog.com/geordie-rose-d-wave-quantum-computing/

Beyond the Moore's Law: Nanocomputing using nanowire tiles http://phys.org/news/2014-01-law-nanocomputing-nanowire-tiles.html

Google Buys UK Intelligence Firm DeepMind http://news.sky.com/story/1201630/google-buys-uk-intelligence-firm-deepmind

Every Technology Has Both Negative and Positive Effects! http://www.singularityweblog.com/roman-yampolskiy-leakproofing-the-singularity/

The Pope's Deathist Words against Radical Life Extension https://www.facebook.com/notes/juan-carlos-kuri-pinto/the-popes-deathist-words-against-radical-life-extension/10151949136217712

Right on target: New era of fast genetic engineering http://www.newscientist.com/article/mg22129530.900-right-on-target-new-era-of-fast-genetic-engineering.html

Programming molecular robots http://wyss.harvard.edu/viewpage/501/

There's a New Law in Physics and It Changes Everything http://www.forbes.com/sites/anthonykosner/2012/02/29/theres-a-new-law-in-physics-and-it-changes-everything/

Breakthrough allows scientists to watch how molecules morph into memories (w/ video) http://medicalxpress.com/news/2014-01-breakthrough-scientists-molecules-morph-memories.html

‘Borophene’ Might Be Joining Graphene in the 2-D Material Club http://spectrum.ieee.org/nanoclast/semiconductors/materials/borophene-might-be-joining-graphene-in-the-2d-material-club

Perching AR Drone Can Watch You Forever http://spectrum.ieee.org/automaton/robotics/aerial-robots/perching-ar-drone-can-watch-you-forever

Futuristic Concept Of A Floating Airport In London http://futuristicnews.com/futuristic-concept-of-a-floating-airport-in-london/

Ray Kurzweil: Your Robot Assistant of the Future http://futuristicnews.com/ray-kurzweil-your-robot-assistant-of-the-future/

Rehabilitation Support Robot “R-cloud” Makes Muscle Movement Visible http://futuristicnews.com/rehabilitation-support-robot-r-cloud-makes-muscle-movement-visible/

The Top 10 Personal 3D Printers http://futuristicnews.com/the-top-10-personal-3d-printers-2013/

Posted by http://goo.gl/JujXk

Dr. Andres Agostini → AMAZON.com/author/agostini → LINKEDIN.com/in/andresagostini → AGO26.blogspot.com → appearoo.com/AAGOSTINI

at

1/28/2014 04:41:00 PM

Monday, January 27, 2014

UPDATE:

Tapping more of the sun’s energy using heat as well as light. At

http://www.kurzweilai.net/tapping-more-of-the-suns-energy-using-heat-as-well-as-light

A 3D window into living cells, no dye required. At

http://www.kurzweilai.net/a-3d-window-into-living-cells-no-dye-required

How to cool microprocessors with carbon nanotubes. At

http://www.kurzweilai.net/how-to-cool-microprocessors-with-carbon-nanotubes

Herschel telescope detects water on dwarf planet. At

http://www.kurzweilai.net/herschel-telescope-detects-water-on-dwarf-planet

Using nanodiamonds to precisely detect neural signals. At

http://www.kurzweilai.net/using-nanodiamonds-to-precisely-detect-neural-signals

15 Tech Companies That Will Define 2014 (Presentation)

http://www.commpro.biz/marketing/15-tech-companies-will-define-2014/#!

9 Ways Social Media Marketing Will Change in 2014

http://mashable.com/2014/01/27/social-media-marketing-2014/#!

Robotics, Robot Detects Landmines From Far, Far Away

http://news.discovery.com/tech/robotics/robot-detects-landmines-from-far-far-away-140123.htm

Posted by http://goo.gl/JujXk

Dr. Andres Agostini → AMAZON.com/author/agostini → LINKEDIN.com/in/andresagostini → AGO26.blogspot.com → appearoo.com/AAGOSTINI

at

1/27/2014 03:51:00 PM

Wednesday, January 22, 2014

New transparent display system could provide wide-angle-view heads-up data

January 22, 2014

MIT researchers have come up with a new approach to transparent displays that could have significant advantages over existing systems for certain kinds of applications: wide viewing angle, simplicity of manufacture, and potentially low cost and scalability.

Photographs showing the transparent screen (left) in comparison with a regular piece of glass (right). A laser projector projects a blue MIT logo onto both the transparent screen and the regular glass; the logo shows up clearly on the transparent screen, but not on the regular glass. Three cups are placed behind to visually assess the transparency. (Credit: C. W. Hsu et al./Nature Communications)

Transparent displays have a variety of potential applications — such as the ability to see navigation or dashboard information while looking through the windshield of a car or plane, or to project video onto a window or a pair of eyeglasses.

A number of technologies have been developed for such displays, but all have limitations.

The innovative system is described in a paper published in the journal Nature Communications, co-authored by MIT professors Marin Soljačić and John Joannopoulos, graduate student Chia Wei Hsu, and four others.

Many current “heads-up” display systems (such as Google Glass) use a mirror or beam-splitter to project an image directly into the user’s eyes, making it appear that the display is hovering in space somewhere in front of him. But such systems are extremely limited in their angle of view: The eyes must be in exactly the right position in order to see the image at all. With the new system, the image appears on the glass itself, and can be seen from a wide array of angles.

Other transparent displays use electronics directly integrated into the glass: organic light-emitting diodes for the display, and transparent electronics to control them. But such systems are complex and expensive, and their transparency is limited.

Embedded nanoparticles tuned to a specific wavelength

The secret to the new system: nanoparticles are embedded in the transparent material. These tiny particles can be tuned to scatter (to make the image look opaque) only certain wavelengths (colors) of light, while letting all the rest pass right through.

A transparent medium (such as glass) embedded with nanoparticles (with scattering cross-section sharply peaked at wavelength λ0) is transparent except for light at wavelength λ0 (credit: C. W. Hsu et al./Nature Communications)

That means the glass remains transparent enough to clearly see colors and shapes in the outside world through the glass display, while a single-color image is clearly visible on the glass.

To demonstrate the system, the team projected a blue image in front of a scene containing cups of several colors, all of which can clearly be seen through the projected image.

The team’s demonstration used silver nanoparticles — each about 60 nanometers across — that produce a blue image, but they say it should be possible to create full-color display images using the same technique.

The three primary colors (red, green, and blue) are enough to produce what we perceive as full-color, and each of the three colors would still show only a very narrow spectral band, allowing all other hues to pass through freely.

“The glass will look almost perfectly transparent,” Soljačić says, “because most light is not of that precise wavelength” that the nanoparticles are designed to scatter. That scattering allows the projected image to be seen in much the same way that smoke in the air can reveal the presence of a laser beam passing through it.
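The wavelength-selective idea described above can be illustrated with a toy calculation. This is only a sketch, not the model from the Nature Communications paper: it assumes a Lorentzian-shaped scattering cross-section peaked at one wavelength and a simple Beer-Lambert exponential for the light that passes straight through, and it ignores absorption. The function names and every number (peak wavelength, linewidth, particle density) are hypothetical values chosen for illustration.

```python
import math

def scattering_cross_section(wavelength_nm, peak_nm, width_nm, sigma_peak):
    """Toy Lorentzian line shape: equals sigma_peak at peak_nm and falls off away from it."""
    half_width = width_nm / 2.0
    return sigma_peak * half_width**2 / ((wavelength_nm - peak_nm) ** 2 + half_width**2)

def transmitted_fraction(wavelength_nm, peak_nm=450.0, width_nm=20.0,
                         sigma_peak=1.0, areal_density=2.0):
    """Fraction of light passing straight through the screen (Beer-Lambert form).

    All defaults are hypothetical; units are chosen so that
    areal_density * cross-section is dimensionless.
    """
    sigma = scattering_cross_section(wavelength_nm, peak_nm, width_nm, sigma_peak)
    return math.exp(-areal_density * sigma)

if __name__ == "__main__":
    # Compare the projected color with two colors from the background scene.
    for name, wavelength in [("blue (projected)", 450.0), ("green", 550.0), ("red", 650.0)]:
        fraction = transmitted_fraction(wavelength)
        print(f"{name:>16} {wavelength:.0f} nm: {fraction:.3f} transmitted")
```

In this toy setup most of the projected blue light is scattered toward the viewer, while green and red light from the scene behind the glass pass through almost untouched. A narrower linewidth would make the screen look clearer still, at the cost of requiring a projector with a more tightly matched color.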

Heads-up windshield displays

Such displays might be used, for example, to provide heads-up windshield displays for drivers or pilots that work full-size at any viewing angle.

Soljačić says that his group’s demonstration is just a proof-of-concept, and that much work remains to optimize the performance of the system. Silver nanoparticles, which are commercially available, were selected for the initial testing because it was “something we could do very simply and cheaply,” Soljačić says. The team’s promising results, even without any attempt to optimize the materials, “gives us encouragement that you could make this work better,” he says.

The particles could be incorporated in a thin, inexpensive plastic coating applied to the glass, much as tinting is applied to car windows. This would work with commercially available laser projectors or conventional projectors that produce the specified color.

The work, which also included members of the U.S. Army Edgewood Chemical Biological Center, was supported by the Army Research Office and the National Science Foundation.

Abstract of Nature Communications paper

The ability to display graphics and texts on a transparent screen can enable many useful applications. Here we create a transparent display by projecting monochromatic images onto a transparent medium embedded with nanoparticles that selectively scatter light at the projected wavelength. We describe the optimal design of such nanoparticles, and experimentally demonstrate this concept with a blue-color transparent display made of silver nanoparticles in a polymer matrix. This approach has attractive features including simplicity, wide viewing angle, scalability to large sizes and low cost.

Posted by http://goo.gl/JujXk

Dr. Andres Agostini → AMAZON.com/author/agostini → LINKEDIN.com/in/andresagostini → AGO26.blogspot.com → appearoo.com/AAGOSTINI

at

1/22/2014 10:45:00 AM

New patent mapping system helps find innovation pathways

January 22, 2014

What’s likely to be the “next big thing?” What might be the most fertile areas for innovation? Where should countries and companies invest their limited research funds? What technology areas are a company’s competitors pursuing?

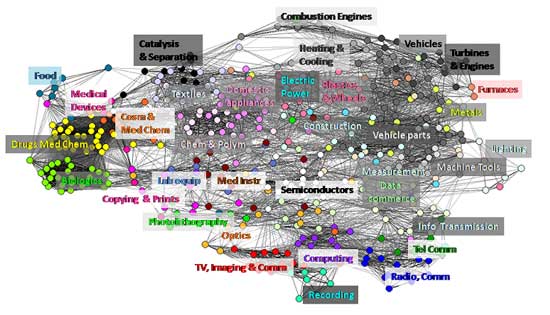

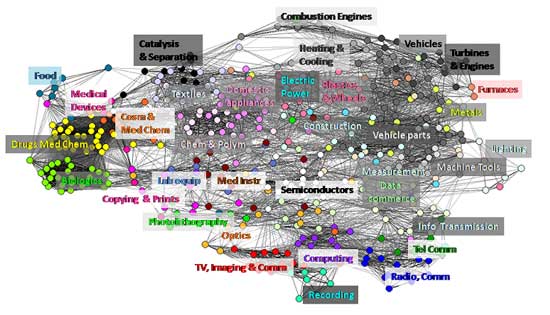

The full Patent Overlay Mapping system patent map shows 466 technology categories and 35 technological areas. Each node color represents a technological area; lines represent relationships between technology categories; labels for technological areas are placed close to the categories with the largest number of patent applications in each area (credit: Luciano Kay)

To help answer those questions, researchers, policy-makers and R&D directors study patent maps, which provide a visual representation of where universities, companies and other organizations are protecting intellectual property produced by their research. But finding real trends in these maps can be difficult because categories with large numbers of patents — pharmaceuticals, for instance — are usually treated the same as areas with few patents.

Forecasting innovation pathways

Now a new patent mapping system, the Patent Overlay Mapping system, which considers how patents cite one another, may help researchers better understand the relationships between technologies, and how they may come together to spur disruptive new areas of innovation.

“What we are trying to do is forecast innovation pathways,” said Alan Porter, professor emeritus in the School of Public Policy and the School of Industrial and Systems Engineering at the Georgia Institute of Technology and the project’s principal investigator. “We take data on research and development, such as publications and patents, and we try to elicit some intelligence to help us gain a sense for where things are headed.”

Patent maps for major corporations can show where those firms plan to diversify, or conversely, where their technological weaknesses are. Looking at a nation’s patent map might also suggest areas where R&D should be expanded to support new areas of innovation, or to fill gaps that may hinder economic growth, he said.

Innovation often occurs at the intersection of major technology sectors, noted Jan Youtie, director of policy research services in Georgia Tech’s Enterprise Innovation Institute. Studying the relationships between different areas can help suggest where the innovation is occurring and what technologies are fueling it. Patent maps can also show how certain disciplines evolve.

“You can see where the portfolio is, and how it is changing,” explained Youtie, who is also an adjunct associate professor in the Georgia Tech School of Public Policy. “In the case of nanotechnology, for example, you can see that most of the patents are in materials and physics, though over time, the number of patents in the bio-nano area is growing.”

The patent mapping research, which was supported by the National Science Foundation, will be described in a paper to be published in an upcoming issue of the Journal of the American Society for Information Science and Technology (JASIST); an open-access draft is available on arXiv.

In addition to Youtie and Porter, the research was conducted by former Georgia Tech graduate student Luciano Kay, now a postdoctoral scholar at the Center for Nanotechnology in Society at the University of California Santa Barbara.

Bypassing the International Patent Classification (IPC) system

“The goal for this research was to create a new type of global patent map that was not tied into existing patent classification systems,” Kay said. “We also wanted an approach that would classify patents into categories or clusters in a graphical representation of interrelated technologies even though they may be located in different sections and levels of the standard patent classification.”

The International Patent Classification (IPC) system is based on a hierarchy of eight top-level classes such as “human necessity” and “electricity.” Patent applications are further classified into 600 or so sub-classes beneath the top-level classes.

Critics note that the IPC brings together technologies such as drugs and hats under the “human necessity” class — technologies that are not really closely related. The system also puts technologies that are closely related — pharmaceuticals and organic chemistry, for instance — into different classes.

The new Patent Overlay Mapping system does away with this hierarchy, and instead considers the similarity between technologies by noting connections between patents — which ones are cited by other patents.
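One way to picture citation-based similarity is the minimal sketch below. It assumes cosine similarity between each category's row of citing-to-cited counts; the article does not say which similarity measure the researchers used, so that choice is an assumption. The category names are borrowed from the examples above, while the counts and the helper names are invented for illustration.

```python
import math

# Hypothetical counts: each row is a citing category, and the columns give how
# often its patents cite patents in (pharmaceuticals, organic_chemistry, hats).
CITING_TO_CITED = {
    "pharmaceuticals":   [120, 80, 1],
    "organic_chemistry": [90, 110, 0],
    "hats":              [2, 0, 30],
}

def cosine_similarity(u, v):
    """Cosine of the angle between two count vectors; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

def category_similarity(first, second):
    """Similarity of two categories based on what their patents cite."""
    return cosine_similarity(CITING_TO_CITED[first], CITING_TO_CITED[second])

if __name__ == "__main__":
    pairs = [("pharmaceuticals", "organic_chemistry"), ("pharmaceuticals", "hats")]
    for first, second in pairs:
        print(f"{first} vs {second}: {category_similarity(first, second):.2f}")
```

With these made-up counts, pharmaceuticals and organic chemistry come out as close neighbors because they cite much the same material, while hats land far away, regardless of where the IPC hierarchy files each of them.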

Measuring technological similarity and distance

“We completely disaggregated the patent classification system and looked at all the categories with at least a thousand patents,” Youtie explained. “We think our map gets closer to measuring the ideas of technological similarity and distance.”

Maps produced by the system provide visual information relating the distances between technologies. The maps can also highlight the density of patenting activity, showing where investments are being made. And they can show gaps where future R&D investments may be needed to provide connections between related technologies.

The researchers produced a series of patent maps by applying their new system to 760,000 patent records filed in the European Patent Office between 2000 and 2006. The data came from the PATSTAT database, and was analyzed using a variety of tools, including the VantagePoint software developed by Intelligent Information Services Corp. of Norcross, along with Georgia Tech.

One surprise in the work was the interdisciplinary nature of many of the 35 patent factors the researchers identified. For instance, the classification “vehicles” included six of the eight sections defined by the IPC system. Only five of the 35 factors were confined to a single section, Youtie said.

Because the researchers adopted a new classification system, other researchers wanting to follow their approach will have to use a thesaurus that translates existing IPC classes to the new system. That conversion system is available online.

The research team also included Ismael Rafols of Universitat Politecnica de Valencia in Spain and Nils Newman of Intelligent Information Services Corp.

This research was supported by the National Science Foundation (NSF) through the Center for Nanotechnology in Society at Arizona State University. The research was also undertaken in collaboration with the Center for Nanotechnology in Society, University of California Santa Barbara. The findings and observations contained in this paper are those of the authors and do not necessarily reflect the views of the NSF.

For further information: Alan Porter, School of Industrial and Systems Engineering at Georgia Institute of Technology.

Abstract of arXiv paper

This paper presents a new global patent map that represents all technological categories, and a method to locate patent data of individual organizations and technological fields on the global map. This overlay map technique may support competitive intelligence and policy decision-making. The global patent map is based on similarities in citing-to-cited relationships between categories of the International Patent Classification (IPC) of European Patent Office (EPO) patents from 2000 to 2006. This patent dataset, extracted from the PATSTAT database, includes 760,000 patent records in 466 IPC-based categories. We compare the global patent maps derived from this categorization to related efforts of other global patent maps. The paper overlays nanotechnology-related patenting activities of two companies and two different nanotechnology subfields on the global patent map. The exercise shows the potential of patent overlay maps to visualize technological areas and potentially support decision-making. Furthermore, this study shows that IPC categories that are similar to one another based on citing-to-cited patterns (and thus are close in the global patent map) are not necessarily in the same hierarchical IPC branch, thus revealing new relationships between technologies that are classified as pertaining to different (and sometimes distant) subject areas in the IPC scheme.

(¯`*• Global Source and/or more resources at http://goo.gl/zvSV7 │ www.Future-Observatory.blogspot.com and on LinkedIn Group's "Becoming Aware of the Futures" at http://goo.gl/8qKBbK │ @SciCzar │ Point of Contact: www.linkedin.com/in/AndresAgostini

Posted by http://goo.gl/JujXk

Dr. Andres Agostini → AMAZON.com/author/agostini → LINKEDIN.com/in/andresagostini → AGO26.blogspot.com → appearoo.com/AAGOSTINI

at

1/22/2014 10:44:00 AM

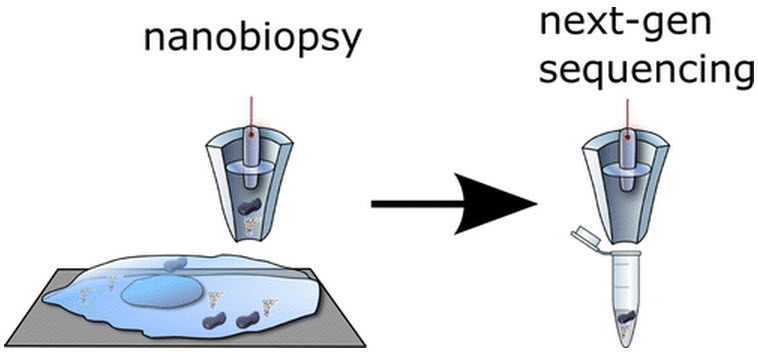

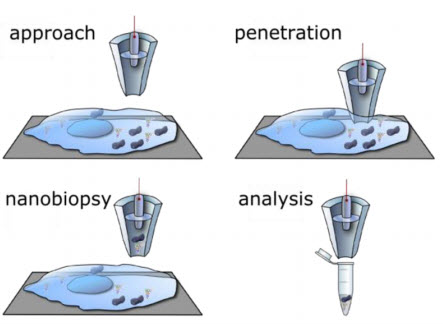

New technique allows minimally invasive ‘nanobiopsies’ of living cells

January 22, 2014

The single-cell nanobiopsy technique is a powerful tool for scientists working to understand the dynamic processes that occur within living cells, according to Nader Pourmand, professor of biomolecular engineering in UCSC’s Baskin School of Engineering.

“We can take a biopsy from a living cell and go back to the same cell multiple times over a couple of days without killing it. With other technologies, you have to sacrifice a cell to analyze it,” said Pourmand, who leads the Biosensors and Bioelectrical Technology group at UCSC.

The nanobiopsy platform is the latest device his group has developed that uses nanopipettes, which are small glass tubes that taper to a fine tip with a diameter of just 50 to 100 nanometers. “We can create nanopipettes in the lab — it doesn’t require an expensive nanofabrication facility,” Pourmand said. “To go into a cell, however, the problem is that you cannot see the tip, even with a high-end microscope, so you don’t know how far away from the cell it is.”

Adam Seger, a postdoctoral researcher in the lab (now at MagArray in Sunnyvale), solved this problem by developing a feedback control system based on a customized scanning ion conductance microscope (SICM). The system uses an ion current across the tip of the nanopipette as a feedback signal, detecting a drop in the current when the tip gets close to the cell surface.

An automated control system positions the nanopipette tip just above the cell surface and then plunges it down quickly to penetrate the cell membrane. Manipulating the voltage triggers the controlled uptake of a minute quantity of cellular material.
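The approach step can be sketched as a simple feedback loop: lower the tip in small steps and stop when the ion current falls a set fraction below its far-field value. Everything numeric here is an assumption for illustration, including the toy current model, the step size, and the 10 percent drop threshold; the article does not give the actual control parameters.

```python
def ion_current(distance_nm, baseline_na=1.0, tip_radius_nm=50.0):
    """Toy model (an assumption): current falls as the tip nears the surface."""
    return baseline_na * distance_nm / (distance_nm + tip_radius_nm)

def approach(start_nm, step_nm=10.0, drop_fraction=0.1, measure=ion_current):
    """Lower the tip until the current drops by `drop_fraction` of its reference.

    Returns the tip height (nm) at which the drop was first detected.
    """
    reference = measure(start_nm)  # current far from the surface
    distance = start_nm
    while distance - step_nm > 0:
        distance -= step_nm
        if measure(distance) <= (1.0 - drop_fraction) * reference:
            return distance        # close enough: hand over to penetration step
    raise RuntimeError("surface not detected before reaching zero height")

stop_height = approach(5000.0)
print(f"tip stopped {stop_height:.0f} nm above the surface")
```

In a real instrument `measure` would read the amplifier rather than evaluate a formula, which is why it is passed in as a parameter.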

Minimal cell damage

Illustration of automated approach to cell surface, penetration in the cell cytosol, followed by controlled aspiration of cytoplasmic material by electrowetting, and delivery of the biopsied material into a tube for analysis. Scheme not to scale. (Credit: UC Santa Cruz/ACS Nano)

Pourmand’s group used the system to extract from living cells tiny amounts of cellular material estimated to be about 50 femtoliters (a femtoliter is one quadrillionth of a liter).

That’s about one percent of the volume of a human cell. The researchers were able to extract and sequence RNA from individual human cancer cells. They also extracted mitochondria (tiny subcellular organelles) from human fibroblasts and sequenced the mitochondrial DNA.

“Mitochondria are known to be involved in many neurodegenerative diseases. This technology can be used to shed light on the importance of mutations in the mitochondrial genome,” Pourmand said.

There are many potential uses for this technology, and Pourmand said he is eager to develop collaborations with other researchers and explore different applications. “It is a versatile platform for anyone trying to understand what is happening inside the cell, including cancer biologists, stem cell biologists, and others,” he said.

Abstract of ACS Nano paper

The ability to study the molecular biology of living single cells in heterogeneous cell populations is essential for next generation analysis of cellular circuitry and function. Here, we developed a single-cell nanobiopsy platform based on scanning ion conductance microscopy (SICM) for continuous sampling of intracellular content from individual cells. The nanobiopsy platform uses electrowetting within a nanopipette to extract cellular material from living cells with minimal disruption of the cellular milieu. We demonstrate the subcellular resolution of the nanobiopsy platform by isolating small subpopulations of mitochondria from single living cells, and quantify mutant mitochondrial genomes in those single cells with high throughput sequencing technology. These findings may provide the foundation for dynamic subcellular genomic analysis.

Posted by http://goo.gl/JujXk at 1/22/2014 10:44:00 AM

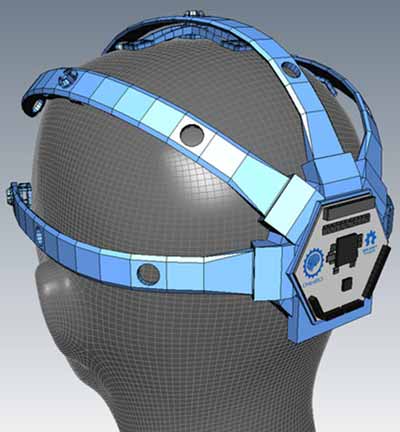

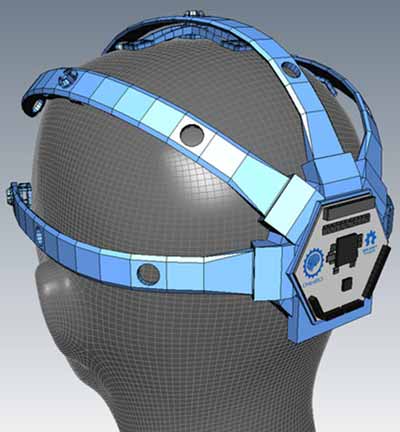

OpenBCI DIY EEG brain-computer-interface Kickstarter ends Jan. 22

January 22, 2014

The deadline for the OpenBCI Kickstarter, an open-source EEG brain-computer interface, is Wednesday Jan. 22 at 8:00 p.m. EST. As of post time (Jan. 22 at 3:29 a.m. EST), $194,520 has been pledged (almost doubling the $100,000 goal), with 866 backers.

OpenBCI 3D-printable EEG headset concept design. Electrodes can be instantly moved and snapped into any of these sections for detecting EEG signals from different regions of the cortex. (Credit: OpenBCI)

“If we reach $200K, we will host … and fund … one domestic and one international hackathon in addition to those funded through the hackathon reward levels … in summer 2014,” founders Joel Murphy & Conor Russomanno promise. Just $5,480 to go.

Here’s a quick update since our Dec. 12 KurzweilAI news article:

- OpenBCI has announced a 3D-printable headset whose printed skeleton allows flexible placement of EEG (electroencephalogram) electrodes to detect signals from different regions of the cortex, which is not possible with commercially available EEG headsets.

- LulzBot is donating a TAZ 3 3D printer and printing supplies to OpenBCI. “The LulzBot Taz 3 has one of the largest build volumes and the fastest print speed of any 3D printer on the market, and it can print a wide range of materials,” say the OpenBCI founders.

- OpenBCI is now providing a choice between an Atmel 8-bit ATmega328 core (for novice programmers and people already familiar with the Arduino environment) and a 32-bit PIC core made by Microchip (for faster data rates at higher channel counts; see chipKIT for reference).

- If you support the Kickstarter at the Research Partner reward tier, “you will be invited to an exclusive OpenBCI Research Partner conference hosted in NYC in the late Spring to help us shape the future of the OpenBCI movement. In addition, you will receive 5 OpenBCI boards or daisy-chain modules, an electrode cap identical to the one seen in our video, and an Electrode Starter Kit.”

- OpenBCI announced four research partnerships: France-based Mensia Technologies, a technology start-up focused on the wellness and healthcare applications of real-time, quantitative neurophysiology; Professor Virginia de Sa and the University of California San Diego’s Cognitive Science Department; FamiLAB, an Orlando hackerspace; and The London Quantified Self Meetup Group.

- Melon, an EEG-based Kickstarter that was successfully funded in the Summer of 2013, has sponsored an OpenBCI/Melon joint hackathon to be hosted this coming summer in Los Angeles.

Posted by http://goo.gl/JujXk at 1/22/2014 10:43:00 AM

Tuesday, January 21, 2014

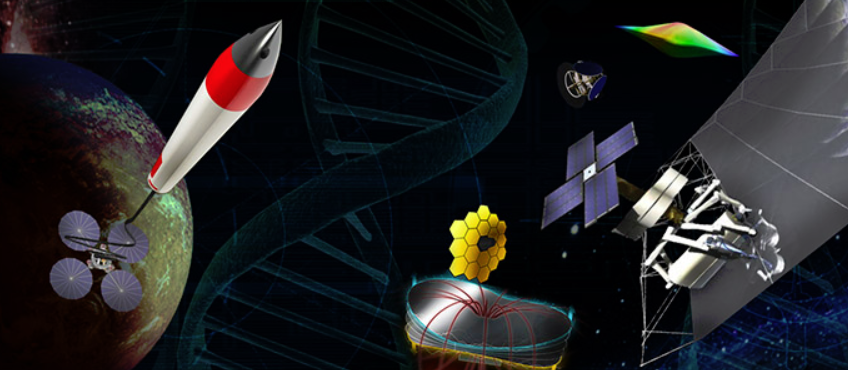

2014 NIAC Symposium

Dates: Feb 4 – 6, 2014

Location: Stanford University, Stanford, California

The NASA Innovative Advanced Concepts (NIAC) Program is proud to announce its annual Symposium! All are invited to attend. It will be held at Stanford University in Stanford, California on February 4–6, 2014.

NIAC Symposia are working meetings to review NIAC progress and plans, and discuss ways to make both more successful. They are open to the public because broad participation increases awareness of our studies and significantly adds to the discussions.

NIAC Symposia feature exciting keynote addresses to inspire the participants. Past NIAC speakers have included visionary thinkers, distinguished scientists, senior government officials, authors, astronauts, and entrepreneurs. Further information on NIAC’s exciting progress and future plans will be discussed.

(Credit: NASA)

The 2014 NIAC Symposium will feature progress presentations from our new Phase I Fellows and current Phase II Fellows. This portfolio of NIAC studies addresses diverse research areas including: Revolutionary Exploration Systems, Novel Propulsion, Human Systems & Architectures for Extreme Environments, Sensing, and Imaging.

(Credit: NASA)

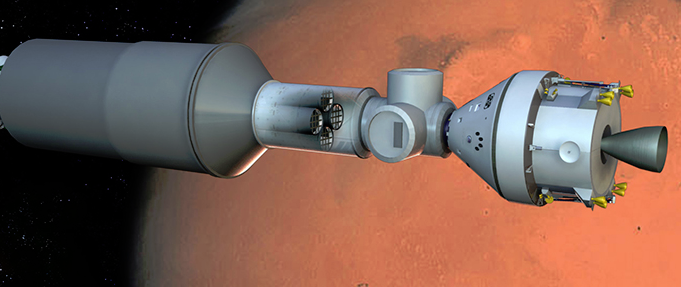

The NASA Innovative Advanced Concepts (NIAC) Program seeks innovative, technically credible advanced concepts that could one day change the possible in aeronautics and space. The development of revolutionary aerospace technologies is critical for our nation to meet its goals, to explore and understand the Earth, our solar system, and the universe. NIAC efforts will improve the nation’s leadership in key research areas, enable far-term capabilities, and spawn innovations that make aeronautics, science, space travel, and exploration more effective, affordable, and sustainable. NIAC is a component of the Space Technology Mission Directorate at NASA Headquarters in Washington, DC.

(Credit: NASA)

Posted by http://goo.gl/JujXk at 1/21/2014 09:02:00 AM

Tiny swimming ‘biobots’ propelled by heart cells or magnetic fields

January 21, 2014

University of Illinois engineers have developed tiny “bio-bot” hybrid machines that swim like sperm, the first synthetic structures that can traverse the viscous fluids of biological environments on their own, according to the engineers.

Synthetic biobots (b) powered by contractile cells are modeled on spermatozoa (a) (credit: Brian J. Williams et al./Nature Communications)

The devices are modeled after single-celled creatures with long tails called flagella — for example, sperm. The researchers begin by creating the body of the bio-bot from a flexible polymer. Then they culture heart cells near the junction of the head and the tail. The cells self-align and synchronize to beat together, sending a wave down the tail that propels the bio-bot forward.

Led by Taher Saif, the Gutgsell Professor of mechanical science and engineering, the team published its work in the journal Nature Communications.

This self-organization is a remarkable emergent phenomenon, Saif said, and how the cells communicate with each other on the flexible polymer tail is yet to be fully understood. But the cells must beat together, in the right direction, for the tail to move.

“It’s the minimal amount of engineering — just a head and a wire,” Saif said. “Then the cells come in, interact with the structure, and make it functional.”

The single-tailed biobots swim at 5 to 10 microns (millionths of a meter) per second. The team also built two-tailed bots, which swim faster, at 81 microns per second. The multiple-tail design also opens up the possibility of navigation. The researchers envision future bots that could sense chemicals or light and navigate toward a target for medical or environmental applications.

“The long-term vision is simple,” said Saif, who is also part of the Beckman Institute for Advanced Science and Technology at the U. of I. “Could we make elementary structures and seed them with stem cells that would differentiate into smart structures to deliver drugs, perform minimally invasive surgery or target cancer?”

The swimming bio-bot project is part of a larger National Science Foundation-supported Science and Technology Center on Emergent Behaviors in Integrated Cellular Systems, which also produced the walking bio-bots developed at Illinois in 2012.

“The most intriguing aspect of this work is that it demonstrates the capability to use computational modeling in conjunction with biological design to optimize performance, or design entirely different types of swimming bio-bots,” said center director Roger Kamm, a professor of biological and mechanical engineering at MIT. “This opens the field up to a tremendous diversity of possibilities. Truly an exciting advance.” Commercialization will take several years, Saif told KurzweilAI.

What’s remarkable about the design is that a power source and electronics are not required. Swimming microrobots previously reported by KurzweilAI include:

- Are you ready for smart ingestible pills that monitor your health and replace passwords?

- How micron-scale swimming robots could deliver drugs and carry cargo

- The ‘living’ micro-robot that could detect diseases in humans

- Bionic microrobot walks on water — perfect spybot, say Chinese scientists

- Pathogen research inspires microrobotics designs

- One signal controls microrobot swarms

- Microbots made to twist and turn as they swim

Sperm with a magnetic coating are controlled by a magnetic field. Just before the sperm reaches the egg, the magnetic coating is ejected. (Credit: Leibniz Institute for Solid State and Materials Research Dresden)

According to IFW Dresden prof. Oliver Schmidt, the design could also assist in artificial insemination, by guiding the sperm to fertilize eggs in a living organism. “Previous experiments were performed with bovine sperm, but the method is in principle applicable to all mammalian sperm,” said Schmidt, who heads the Institute for Integrative Nanosciences at the IFW Dresden and holds a chair at the Technical University of Chemnitz.

Abstract of Nature Communications paper

Many microorganisms, including spermatozoa and forms of bacteria, oscillate or twist a hair-like flagella to swim. At this small scale, where locomotion is challenged by large viscous drag, organisms must generate time-irreversible deformations of their flagella to produce thrust. To date, there is no demonstration of a self propelled, synthetic flagellar swimmer operating at low Reynolds number. Here we report a microscale, biohybrid swimmer enabled by a unique fabrication process and a supporting slender-body hydrodynamics model. The swimmer consists of a polydimethylsiloxane filament with a short, rigid head and a long, slender tail on which cardiomyocytes are selectively cultured. The cardiomyocytes contract and deform the filament to propel the swimmer at 5–10 μm s−1, consistent with model predictions. We then demonstrate a two-tailed swimmer swimming at 81 μm s−1. This small-scale, elementary biohybrid swimmer can serve as a platform for more complex biological machines.

Abstract of Advanced Materials paper

A fascinating approach to the development of a hybrid micro-bio-robot is presented by Samuel Sanchez, Oliver G. Schmidt and Veronika Magdanz on page 6581. Rolled-up microtubes are used to capture single sperm cells and remotely control them to a desired location. These microbio-robots are useful for the controlled guidance of sperm cells, which is a promising feature towards the development of new assisted-fertilization techniques.

Posted by http://goo.gl/JujXk at 1/21/2014 09:01:00 AM

Marvin Minsky honored for lifetime achievements in artificial intelligence

January 21, 2014

Marvin Minsky (credit: Wikipedia)

The BBVA Foundation cited his influential role in defining the field of artificial intelligence, and in mentoring many of the leading minds in today’s artificial intelligence community. The award also recognizes his contributions to the fields of mathematics, cognitive science, robotics, and philosophy.

On learning of the award, which brings a prize of $540,000, Minsky reaffirmed his conviction that one day we will develop machines that will be as smart as humans. But he added, “How long this takes will depend on how many people we have working on the right problems. Right now there is a shortage of both researchers and funding.”

Minsky joined the faculty of MIT’s Department of Electrical Engineering and Computer Science in 1958, and co-founded the Artificial Intelligence Laboratory (now the Computer Science and Artificial Intelligence Laboratory) the following year. In 1985, he became a founding member of the Media Lab, where he was named the Toshiba Professor of Media Arts and Sciences, and where he continues to teach and mentor.

Commenting on the award, Nicholas Negroponte, co-founder and chairman emeritus of the Media Lab, says, “Marvin’s genius is accompanied by extreme kindness and humor. He listens well, and is oracle-like in his capability to debug an enormously complex situation with a simple, short phrase. Through the 47 years we have known each other, he has taught me to tackle the big problems.”

Patrick Winston, the Ford Professor of Artificial Intelligence and Computer Science and former director of MIT’s Artificial Intelligence Lab, says, “One day, when I was wondering what I wanted to do, I went to one of Marvin’s lectures, invited by a friend. At the end, I said to myself, I want to do what he does. And ever since, that is what I have done.”

Minsky views the brain as a machine whose functioning can be studied and replicated in a computer, which would teach us, in turn, to better understand the human brain and higher-level mental functions. He has been recognized for his work on how we might endow machines with common sense, the knowledge humans acquire every day through experience. How, for example, do we teach a sophisticated computer that to drag an object on a string, you need to pull, not push, a concept easily mastered by a two-year-old child?

Minsky’s book The Society of Mind (1985) is considered the seminal work on exploring intellectual structure and function, and for understanding the diversity of the mechanisms interacting in intelligence and thought. Other achievements include building the first neural network simulator (SNARC), as well as mechanical hands and other robotic devices. Minsky is the inventor of the earliest confocal scanning microscope. He was also involved in inventing the first “turtle,” or cursor, for the LOGO programming language (with Seymour Papert), and the “Muse” synthesizer for musical variations (with Ed Fredkin). His most recent book, The Emotion Machine: Commonsense Thinking, Artificial Intelligence, and the Future of the Human Mind, was published in 2006.

Minsky has received numerous awards, among them the ACM Turing Award, the Japan Prize, the Royal Society of Medicine Rank Prize (for Optoelectronics) and the Optical Society (OSA) R.W. Wood Prize.

Minsky graduated from Harvard University in 1950, and received his PhD from Princeton University in 1954. He was appointed a Harvard University Junior Fellow from 1954 to 1957.

The BBVA Foundation was established by the BBVA Group, a global financial service group based in Spain. The Frontiers of Knowledge Awards, established in 2008, honor achievements in the arts, science, and technology. They focus on contributions of lasting impact for their originality, theoretical significance and ability to push back the frontiers of the known world.

Posted by http://goo.gl/JujXk at 1/21/2014 09:00:00 AM

Monday, January 20, 2014

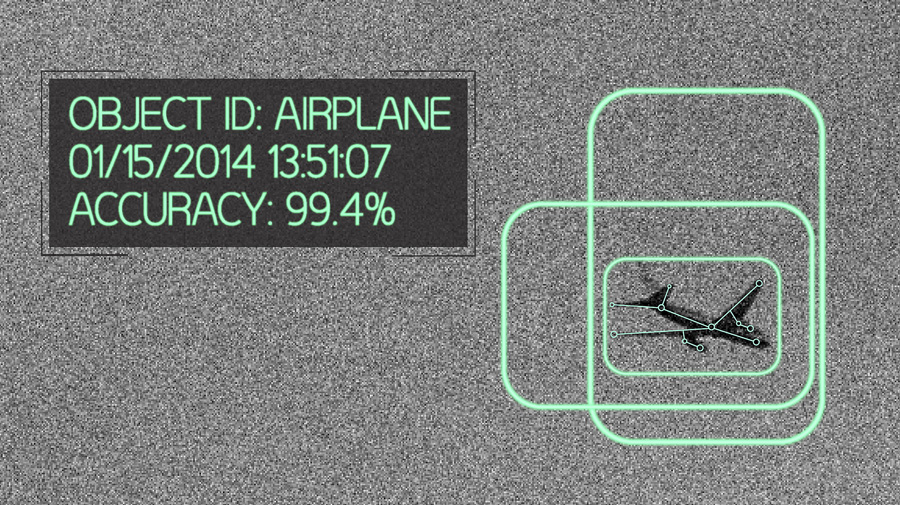

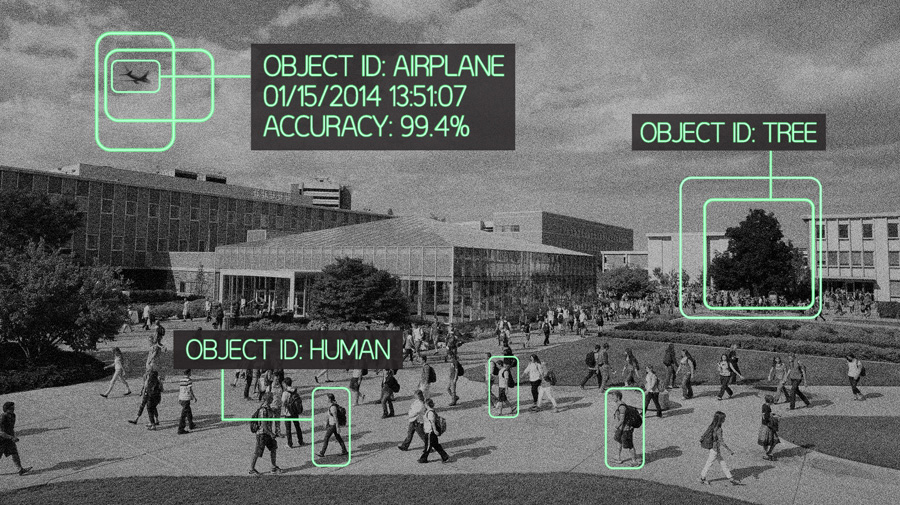

A smart-object recognition algorithm that doesn’t need humans

Skynet alert

January 17, 2014

(Credit: BYU Photo)

“In most cases, people are in charge of deciding what features to focus on and they then write the algorithm based off that,” said Lee, a professor of electrical and computer engineering. “With our algorithm, we give it a set of images and let the computer decide which features are important.”

Humans need not apply

Not only is Lee’s genetic algorithm able to set its own parameters, but it also doesn’t need to be reset each time a new object is to be recognized — it learns them on its own.

Lee likens the idea to teaching a child the difference between dogs and cats. Instead of trying to explain the difference, we show children images of the animals and they learn on their own to distinguish the two. Lee’s object recognition algorithm works the same way: instead of telling the computer what to look at to distinguish between two objects, the researchers simply feed it a set of images and it learns on its own.

(Credit: BYU Photo)

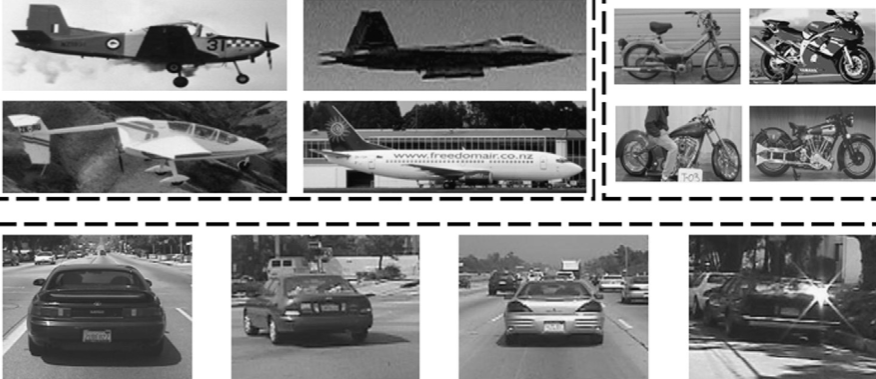

In a study published in the December issue of academic journal Pattern Recognition, Lee and his students demonstrate both the independent ability and accuracy of their “ECO features” genetic algorithm.

The BYU algorithm tested as well as or better than other top published object recognition algorithms, including those developed by NYU’s Rob Fergus and Thomas Serre of Brown University.

Lee and his students fed their object recognition program four image datasets from Caltech (motorbikes, faces, airplanes and cars) and found 100 percent accurate recognition on every dataset. The other published well-performing object recognition systems scored in the 95–98% range.

Example images from the Caltech image datasets after being scaled and having color removed (credit: BYU Photo)

The team has also tested their algorithm on a dataset of fish images from BYU’s biology department that included photos of four species. The algorithm was able to distinguish between the species with 99.4% accuracy.

Lee said the results show the algorithm could be used for a number of applications, from detecting invasive fish species to identifying flaws in produce such as apples on a production line. More interesting applications might be surveillance and robot vision systems.

“It’s very comparable to other object recognition algorithms for accuracy, but, we don’t need humans to be involved,” Lee said. “You don’t have to reinvent the wheel each time. You just run it.”

Abstract of Pattern Recognition paper

This paper presents a novel approach for object detection using a feature construction method called Evolution-COnstructed (ECO) features. Most other object recognition approaches rely on human experts to construct features. ECO features are automatically constructed by uniquely employing a standard genetic algorithm to discover series of transforms that are highly discriminative. Using ECO features provides several advantages over other object detection algorithms including: no need for a human expert to build feature sets or tune their parameters, ability to generate specialized feature sets for different objects, and no limitations to certain types of image sources. We show in our experiments that ECO features perform better or comparable with hand-crafted state-of-the-art object recognition algorithms. An analysis is given of ECO features which includes a visualization of ECO features and improvements made to the algorithm.
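To make the idea of evolving a series of transforms concrete, here is a toy genetic algorithm. It is not the ECO features algorithm: the transform pool, the synthetic 1-D data, the fitness measure, and all parameters are assumptions chosen to keep the sketch short. Each genome is a sequence of transform names, and fitness rewards sequences whose output separates two classes.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical pool of simple 1-D transforms standing in for image transforms.
TRANSFORMS = {
    "identity": lambda x: x,
    "gradient": lambda x: np.gradient(x, axis=1),
    "abs": np.abs,
    "square": np.square,
    "smooth": lambda x: (x + np.roll(x, 1, axis=1) + np.roll(x, -1, axis=1)) / 3.0,
}
NAMES = list(TRANSFORMS)

def make_data(n=40, length=32):
    """Two synthetic classes with the same mean but different spread."""
    return rng.normal(0.0, 1.0, (n, length)), rng.normal(0.0, 2.0, (n, length))

def apply(genome, x):
    """Apply the named transforms in order."""
    for name in genome:
        x = TRANSFORMS[name](x)
    return x

def fitness(genome, a, b):
    """Class separation on one scalar feature (the mean of the output)."""
    fa = apply(genome, a).mean(axis=1)
    fb = apply(genome, b).mean(axis=1)
    pooled = np.sqrt(0.5 * (fa.var() + fb.var())) + 1e-12
    return abs(fa.mean() - fb.mean()) / pooled

def evolve(a, b, pop_size=20, genome_len=3, generations=15):
    population = [[str(n) for n in rng.choice(NAMES, genome_len)]
                  for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=lambda g: fitness(g, a, b), reverse=True)
        parents = population[: pop_size // 2]        # keep the fitter half
        children = []
        while len(parents) + len(children) < pop_size:
            i, j = rng.choice(len(parents), 2, replace=False)
            cut = int(rng.integers(1, genome_len))   # one-point crossover
            child = parents[i][:cut] + parents[j][cut:]
            if rng.random() < 0.3:                   # point mutation
                child[int(rng.integers(genome_len))] = str(rng.choice(NAMES))
            children.append(child)
        population = parents + children
    return max(population, key=lambda g: fitness(g, a, b))

a, b = make_data()
best = evolve(a, b)
print(best, round(float(fitness(best, a, b)), 2))
```

Because the two classes differ only in spread, a sequence with no nonlinear step cannot separate them on the mean, so the search has to discover that a transform such as `abs` or `square` is needed. No human specifies that in advance, which is the point the abstract makes about automatically constructed features.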

Posted by http://goo.gl/JujXk at 1/20/2014 06:58:00 PM

How Internet surveillance predicts disease outbreak before WHO

Creating your own disease surveillance maps

January 20, 2014

Have you ever Googled for an online diagnosis before visiting a doctor? If so, you may have helped provide early warning of an infectious disease epidemic.

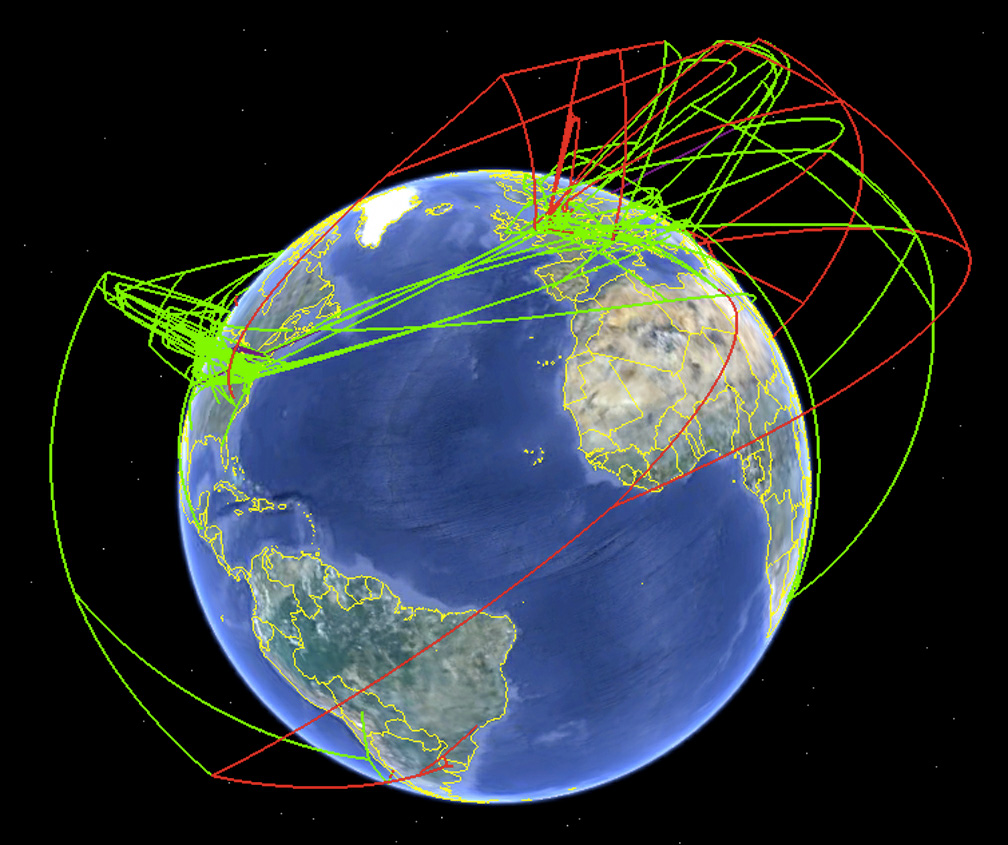

Screenshot of the spread of H7 influenza as produced by Supramap and visualized by Google Earth in 2009. This view illustrates the historical spread of high pathogenic lineages (high-altitude red lines) and the local evolution of high pathogenicity (low-altitude red lines). (Credit: Janies/OSU)

In a new study published in Lancet Infectious Diseases, Internet-based surveillance has been found to detect infectious diseases such as dengue fever and influenza up to two weeks earlier than traditional surveillance methods, according to Queensland University of Technology (QUT) research fellow and senior author of the paper Wenbiao Hu.

Hu, based at the Institute for Health and Biomedical Innovation, said there was often a lag time of two weeks before traditional surveillance methods could detect an emerging infectious disease.

“This is because traditional surveillance relies on the patient recognizing the symptoms and seeking treatment before diagnosis, along with the time taken for health professionals to alert authorities through their health networks. In contrast, digital surveillance can provide real-time detection of epidemics.”

Hu said the study used search engine tools such as Google Trends and Google Insights. It found that detecting the 2005–06 avian influenza (“bird flu”) outbreak would have been possible between one and two weeks earlier than official surveillance reports.
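One simple way to estimate how far search activity runs ahead of official reports is a lagged correlation: shift the search series forward by 0, 1, 2, ... periods and see which shift best matches the reported cases. The sketch below does this on invented weekly numbers; the data, the function name `best_lead`, and the choice of Pearson correlation are assumptions for illustration, not the method of the study.

```python
import numpy as np

def best_lead(search_index, reported_cases, max_lead=4):
    """Return (lead, r): the shift at which search activity best matches cases.

    A lead of k means search activity in period t is compared with
    reported cases in period t + k.
    """
    search = np.asarray(search_index, dtype=float)
    cases = np.asarray(reported_cases, dtype=float)
    best = (0, -1.0)
    for lead in range(max_lead + 1):
        s = search[: len(search) - lead]
        c = cases[lead:]
        r = np.corrcoef(s, c)[0, 1]   # Pearson correlation at this shift
        if r > best[1]:
            best = (lead, r)
    return best

# Invented weekly series: the case curve repeats the search curve two weeks later.
search = [1, 1, 2, 5, 9, 14, 10, 6, 3, 2, 1, 1, 1, 1]
cases = [1, 1, 1, 1, 2, 5, 9, 14, 10, 6, 3, 2, 1, 1]

lead, r = best_lead(search, cases)
print(f"search activity leads reported cases by {lead} week(s)")
```

Real search data are noisier and respond to news coverage as well as illness, so a high correlation at some lead is suggestive rather than proof of early detection.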

“In another example, a digital data collection network was found to be able to detect the SARS outbreak more than two months before the first publications by the World Health Organization (WHO),” Hu said.

“Early detection means early warning and that can help reduce or contain an epidemic, as well as alert public health authorities to ensure risk management strategies such as the provision of adequate medication are implemented.”

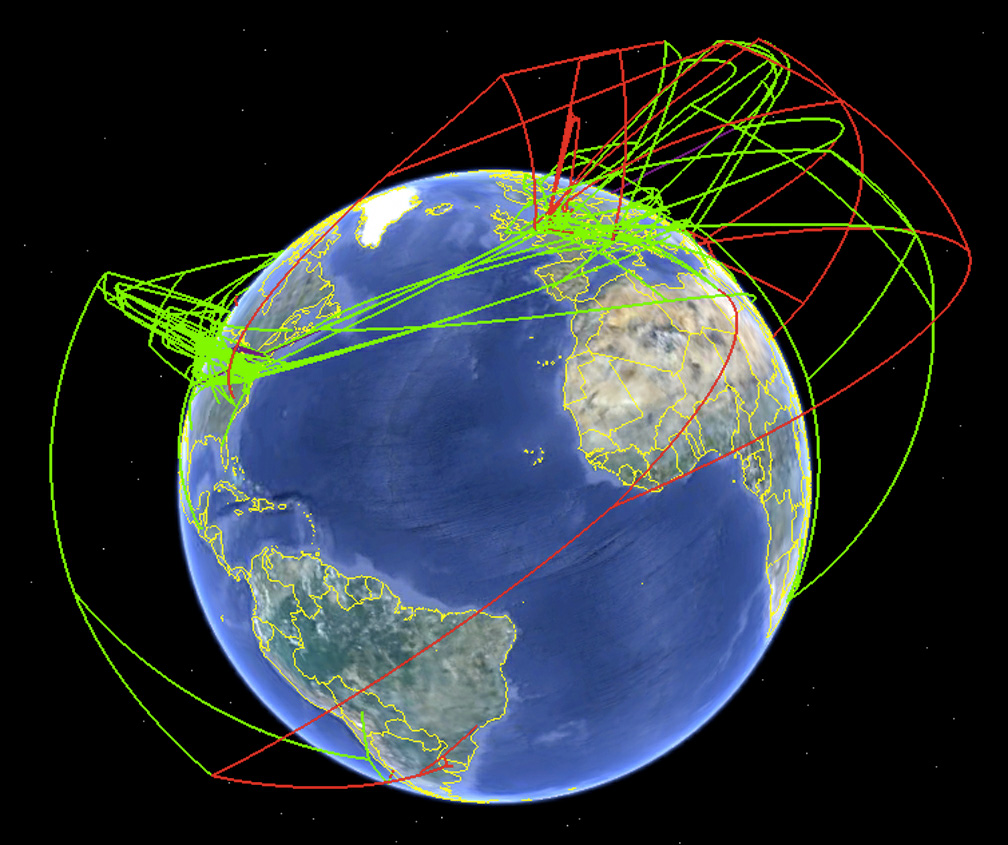

According to this week’s CDC FluView report published Jan. 17, 2014, influenza activity in the United States remains high overall, with 3,745 laboratory-confirmed influenza-associated hospitalizations reported since October 1, 2013 (credit: CDC)

Hu said the study found that social media, including Twitter, Facebook, and microblogs, could also be effective in detecting disease outbreaks. “The next step would be to combine the approaches currently available such as social media, aggregator websites, and search engines, along with other factors such as climate and temperature, and develop a real-time infectious disease predictor.”

“The international nature of emerging infectious diseases, combined with the globalization of travel and trade, has increased the interconnectedness of all countries and that means detecting, monitoring and controlling these diseases is a global concern.”

The other authors of the paper were Gabriel Milinovich (first author), Gail Williams and Archie Clements from the University of Queensland School of Population, Health and State.

Supramap

Another powerful tool is Supramap, a web application that synthesizes large, diverse datasets so that researchers can better understand the spread of infectious diseases across hosts and geography by integrating genetic, evolutionary, geospatial, and temporal data. It is now open-source — create your own maps here.

Tracking Swine Flu: Daniel Janies, former associate professor of Biomedical Informatics at The Ohio State University, shows how he used Google Earth to track the H1N1 flu virus. (2009)

Supramap was originally developed in 2007 to track the spread and evolution of pandemic (H1N1) and avian influenza (H5N1).

“Using SUPRAMAP, we initially developed maps that illustrated the spread of drug-resistant influenza and host shifts in H1N1 and H5N1 influenza and in coronaviruses, such as SARS,” said Janies. “SUPRAMAP allows the user to track strains carrying key mutations in a geospatial browser such as Google Earth. Our software allows public health scientists to update and view maps on the evolution and spread of pathogens.”

Grant funding through the U.S. Army Research Laboratory and Office supports this Innovation Group on Global Infectious Disease Research project. Support for the computational requirements of the project comes from the American Museum of Natural History (AMNH) and OSC. Ohio State’s Wexner Medical Center, Department of Biomedical Informatics and offices of Academic Affairs and Research provide additional support.

Abstract of The Lancet Infectious Diseases paper

Emerging infectious diseases present a complex challenge to public health officials and governments; these challenges have been compounded by rapidly shifting patterns of human behaviour and globalisation. The increase in emerging infectious diseases has led to calls for new technologies and approaches for detection, tracking, reporting, and response. Internet-based surveillance systems offer a novel and developing means of monitoring conditions of public health concern, including emerging infectious diseases. We review studies that have exploited internet use and search trends to monitor two such diseases: influenza and dengue. Internet-based surveillance systems have good congruence with traditional surveillance approaches. Additionally, internet-based approaches are logistically and economically appealing. However, they do not have the capacity to replace traditional surveillance systems; they should not be viewed as an alternative, but rather an extension. Future research should focus on using data generated through internet-based surveillance and response systems to bolster the capacity of traditional surveillance systems for emerging infectious diseases.
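
The "good congruence" the abstract describes is typically checked by correlating an internet-derived signal with officially reported case counts, often allowing for a lead time. The following is a minimal sketch of that idea in Python, not the method of the paper: the function names and the weekly numbers are made up for illustration only.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


def lagged_correlation(search, cases, lag):
    """Correlate search volume in week t with reported cases in week t + lag."""
    if lag < 0:
        raise ValueError("lag must be non-negative")
    if lag == 0:
        return pearson(search, cases)
    return pearson(search[:-lag], cases[lag:])


# Hypothetical weekly values, NOT real surveillance data.
search_index = [10, 14, 22, 35, 50, 48, 30, 18]
reported_cases = [5, 6, 9, 15, 24, 33, 31, 20]

for lag in range(3):
    r = lagged_correlation(search_index, reported_cases, lag)
    print(f"lag={lag} weeks, r={r:.3f}")
```

A high correlation at a positive lag would suggest that the search signal leads the official reports, which is the sense in which such systems can extend, rather than replace, traditional surveillance.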

Abstract of The Supramap project: linking pathogen genomes with geography to fight emergent infectious diseases

Novel pathogens have the potential to become critical issues of national security, public health and economic welfare. As demonstrated by the response to Severe Acute Respiratory Syndrome (SARS) and influenza, genomic sequencing has become an important method for diagnosing agents of infectious disease. Despite the value of genomic sequences in characterizing novel pathogens, raw data on their own do not provide the information needed by public health officials and researchers. One must integrate knowledge of the genomes of pathogens with host biology and geography to understand the etiology of epidemics. To these ends, we have created an application called Supramap (http://supramap.osu.edu) to put information on the spread of pathogens and key mutations across time, space and various hosts into a geographic information system (GIS). To build this application, we created a web service for integrated sequence alignment and phylogenetic analysis as well as methods to describe the tree, mutations, and host shifts in Keyhole Markup Language (KML). We apply the application to 239 sequences of the polymerase basic 2 (PB2) gene of recent isolates of avian influenza (H5N1). We map a mutation, glutamic acid to lysine at position 627 in the PB2 protein (E627K), in H5N1 influenza that allows for increased replication of the virus in mammals. We use a statistical test to support the hypothesis of a correlation of E627K mutations with avian-mammalian host shifts but reject the hypothesis that lineages with E627K are moving westward. Data, instructions for use, and visualizations are included as supplemental materials at: http://supramap.osu.edu/sm/supramap/publications.
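
The abstract says that Supramap describes the tree, mutations, and host shifts in Keyhole Markup Language (KML) so they can be viewed in a geospatial browser. As a rough illustration of the output format only, the sketch below writes one KML Placemark per isolate with Python's standard library. It is not Supramap's code: the `isolates_to_kml` function, the record fields, and the sample isolates are all hypothetical, and the phylogenetic analysis is omitted entirely.

```python
import xml.etree.ElementTree as ET

KML_NS = "http://www.opengis.net/kml/2.2"


def _tag(name):
    """Qualify an element name with the KML namespace."""
    return f"{{{KML_NS}}}{name}"


def isolates_to_kml(isolates):
    """Return a KML string with one time-stamped Placemark per isolate."""
    ET.register_namespace("", KML_NS)  # serialize KML as the default namespace
    kml = ET.Element(_tag("kml"))
    doc = ET.SubElement(kml, _tag("Document"))
    for iso in isolates:
        placemark = ET.SubElement(doc, _tag("Placemark"))
        ET.SubElement(placemark, _tag("name")).text = iso["name"]
        ET.SubElement(placemark, _tag("description")).text = (
            f"host={iso['host']}; PB2 residue 627={iso['pb2_627']}"
        )
        stamp = ET.SubElement(placemark, _tag("TimeStamp"))
        ET.SubElement(stamp, _tag("when")).text = iso["date"]
        point = ET.SubElement(placemark, _tag("Point"))
        # KML orders coordinates as longitude,latitude.
        ET.SubElement(point, _tag("coordinates")).text = f"{iso['lon']},{iso['lat']}"
    return ET.tostring(kml, encoding="unicode")


# Hypothetical isolates for illustration; not taken from the 239 PB2 sequences.
sample = [
    {"name": "isolate-A", "host": "avian", "pb2_627": "E",
     "date": "2005-01-15", "lon": 100.5, "lat": 13.7},
    {"name": "isolate-B", "host": "mammal", "pb2_627": "K",
     "date": "2006-03-02", "lon": 32.9, "lat": 39.9},
]

print(isolates_to_kml(sample))
```

Saving the printed text to a `.kml` file should let a viewer such as Google Earth show the points, with the `TimeStamp` elements supplying the time dimension.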

(¯`*• Global Source and/or more resources at http://goo.gl/zvSV7 │ www.Future-Observatory.blogspot.com and on LinkedIn Group's "Becoming Aware of the Futures" at http://goo.gl/8qKBbK │ @SciCzar │ Point of Contact: www.linkedin.com/in/AndresAgostini

Posted by Dr. Andres Agostini (http://goo.gl/JujXk → AMAZON.com/author/agostini → LINKEDIN.com/in/andresagostini → AGO26.blogspot.com → appearoo.com/AAGOSTINI) at 1/20/2014 06:58:00 PM

Cosmic web imaged for the first time

January 20, 2014

This deep image shows the nebula (cyan) extending across 2 million light-years that was discovered around the bright quasar UM287 (at the center of the nebula). The energetic radiation of the quasar makes the surrounding intergalactic gas glow, revealing the morphology and physical properties of a cosmic web filament. The image was obtained at the W. M. Keck Observatory. (Credit: S. Cantalupo, UCSC)

Researchers at the University of California, Santa Cruz led the study, published January 19 in Nature.

Using the 10-meter Keck I Telescope at the W. M. Keck Observatory in Hawaii, the researchers detected a very large, luminous nebula of gas extending about 2 million light-years across intergalactic space.

“This is a very exceptional object: it’s huge, at least twice as large as any nebula detected before, and it extends well beyond the galactic environment of the quasar,” said first author Sebastiano Cantalupo, a postdoctoral fellow at UC Santa Cruz.

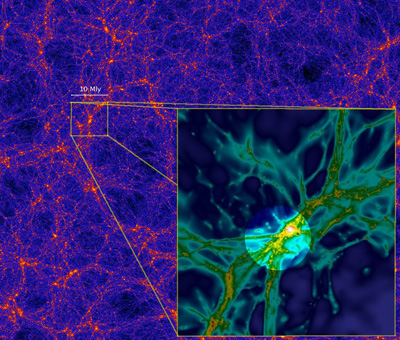

The cosmic web of matter

Computer simulations suggest that matter in the universe is distributed in a “cosmic web” of filaments, as seen in the image above from a large-scale dark-matter simulation (the Bolshoi simulation, by Anatoly Klypin and Joel Primack). The inset is a zoomed-in, high-resolution image of a smaller part of the cosmic web, 10 million light-years across, from a simulation that includes gas as well as dark matter (credit: S. Cantalupo). The intense radiation from a quasar can, like a flashlight, illuminate part of the surrounding cosmic web (highlighted in the image) and make a filament of gas glow, as was observed in the case of quasar UM287. (Credit: Anatoly Klypin, Joel Primack, S. Cantalupo)

This web is seen in the results from computer simulations of the evolution of structure in the universe, which show the distribution of dark matter on large scales, including the dark matter halos in which galaxies form and the cosmic web of filaments that connect them. Gravity causes ordinary matter to follow the distribution of dark matter, so filaments of diffuse, ionized gas are expected to trace a pattern similar to that seen in dark matter simulations.

Until now, however, these filaments have never been seen. Intergalactic gas has been detected by its absorption of light from bright background sources, but those results don’t reveal how the gas is distributed. In this study, the researchers detected the fluorescent glow of hydrogen gas resulting from its illumination by intense radiation from the quasar.

“This quasar is illuminating diffuse gas on scales well beyond any we’ve seen before, giving us the first picture of extended gas between galaxies. It provides a terrific insight into the overall structure of our universe,” said coauthor J. Xavier Prochaska, professor of astronomy and astrophysics at UC Santa Cruz.

The hydrogen gas illuminated by the quasar emits ultraviolet light known as Lyman alpha radiation. The distance to the quasar is so great (about 10 billion light-years) that the emitted light is “stretched” by the expansion of the universe from an invisible ultraviolet wavelength to a visible shade of violet by the time it reaches the Keck Telescope.

Knowing the distance to the quasar, the researchers calculated the wavelength at which Lyman alpha radiation from that distance would arrive (based on the Doppler frequency shift, similar to the decreasing pitch of a train whistle as it travels away from you) and built a special filter for the telescope’s LRIS spectrometer to get an image at that wavelength.
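
The size of the shift can be worked out from the redshift quoted in the Nature abstract (z ≈ 2.3) and the standard relation between rest and observed wavelength, λ_obs = λ_rest × (1 + z). The short Python sketch below is only a back-of-the-envelope check, not the researchers' filter calculation. It assumes the usual laboratory rest wavelength of Lyman alpha, about 121.567 nm, which the article itself does not state.

```python
# Rest wavelength of hydrogen Lyman alpha in nanometers (standard laboratory
# value; an assumption here, since the article does not quote it).
LYMAN_ALPHA_REST_NM = 121.567


def observed_wavelength_nm(rest_nm, z):
    """Observed wavelength of light emitted at rest_nm from redshift z."""
    if z <= -1:
        raise ValueError("redshift must be greater than -1")
    return rest_nm * (1.0 + z)


# z = 2.3 is the approximate redshift given in the Nature abstract.
print(round(observed_wavelength_nm(LYMAN_ALPHA_REST_NM, 2.3), 1))  # 401.2
```

A wavelength near 401 nm sits at the violet end of the visible spectrum, consistent with the article's description of ultraviolet light stretched to a visible shade of violet.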

“We have studied other quasars this way without detecting such extended gas,” Cantalupo said. “The light from the quasar is like a flashlight beam, and in this case we were lucky that the flashlight is pointing toward the nebula and making the gas glow. We think this is part of a filament that may be even more extended than this, but we only see the part of the filament that is illuminated by the beamed emission from the quasar.”

Dark galaxies

A quasar is a type of active galactic nucleus that emits intense radiation powered by a supermassive black hole at the center of the galaxy. In an earlier survey of distant quasars using the same technique to look for glowing gas, Cantalupo and others detected “dark galaxies,” the densest knots of gas in the cosmic web. These dark galaxies are thought to be either too small or too young to have formed stars.

“The dark galaxies are much denser and smaller parts of the cosmic web. In this new image, we also see dark galaxies, in addition to the much more diffuse and extended nebula,” Cantalupo said. “Some of this gas will fall into galaxies, but most of it will remain diffuse and never form stars.”

The researchers estimated the amount of gas in the nebula to be at least ten times more than expected from the results of computer simulations. “We think there may be more gas contained in small dense clumps within the cosmic web than is seen in our models. These observations are challenging our understanding of intergalactic gas and giving us a new laboratory to test and refine our models,” Cantalupo said.

In addition to Cantalupo and Prochaska, the coauthors of the paper include Piero Madau, professor of astronomy and astrophysics at UC Santa Cruz, and Fabrizio Arrigoni-Battaia and Joseph Hennawi at the Max Planck Institute for Astronomy in Heidelberg, Germany.

This research was supported by grants from the National Science Foundation and NASA.

Abstract of Nature paper

Simulations of structure formation in the Universe predict that galaxies are embedded in a ‘cosmic web’, where most baryons reside as rarefied and highly ionized gas. This material has been studied for decades in absorption against background sources, but the sparseness of these inherently one-dimensional probes preclude direct constraints on the three-dimensional morphology of the underlying web. Here we report observations of a cosmic web filament in Lyman-α emission, discovered during a survey for cosmic gas fluorescently illuminated by bright quasars at redshift z ≈ 2.3. With a linear projected size of approximately 460 physical kiloparsecs, the Lyman-α emission surrounding the radio-quiet quasar UM 287 extends well beyond the virial radius of any plausible associated dark-matter halo and therefore traces intergalactic gas. The estimated cold gas mass of the filament from the observed emission (about $10^{12.0 \pm 0.5}/C^{1/2}$ solar masses, where C is the gas clumping factor) is more than ten times larger than what is typically found in cosmological simulations, suggesting that a population of intergalactic gas clumps with subkiloparsec sizes may be missing in current numerical models.
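
The mass estimate in the abstract, read as 10^(12.0 ± 0.5) / C^(1/2) solar masses, depends on the unknown clumping factor C: the clumpier the gas, the less mass is needed to produce the same glow. The Python sketch below simply evaluates that expression for a few values of C. The function name and the chosen C values are illustrative assumptions, not figures from the paper.

```python
from math import sqrt


def cold_gas_mass_range(clumping, log10_mass=12.0, log10_err=0.5):
    """Return (low, central, high) cold gas mass in solar masses.

    Evaluates 10**(log10_mass +/- log10_err) / sqrt(clumping), the form of
    the estimate quoted in the abstract.
    """
    if clumping <= 0:
        raise ValueError("clumping factor must be positive")
    scale = 1.0 / sqrt(clumping)
    return tuple(
        10 ** (log10_mass + delta) * scale
        for delta in (-log10_err, 0.0, log10_err)
    )


# Illustrative clumping factors only; the abstract does not fix a value of C.
for c in (1, 100, 1000):
    low, mid, high = cold_gas_mass_range(c)
    print(f"C={c}: {low:.2e} to {high:.2e} solar masses (central {mid:.2e})")
```

Under this reading, the abstract's suggestion of unresolved subkiloparsec clumps corresponds to a large C, which lowers the implied mass toward what simulations predict.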

Posted by Dr. Andres Agostini (http://goo.gl/JujXk → AMAZON.com/author/agostini → LINKEDIN.com/in/andresagostini → AGO26.blogspot.com → appearoo.com/AAGOSTINI) at 1/20/2014 06:57:00 PM
